Sense and Sensitivity

In Jane Austen’s Sense and Sensibility, a secret engagement is a major plot point. The protagonist Elinor Dashwood learns the secret in chapter 22 and keeps it, painfully, until chapter 37, when she can finally tell her sister Marianne:

“For four months, Marianne, I have had all this hanging on my mind, without being at liberty to speak of it to a single creature; knowing that it would make you and my mother most unhappy whenever it were explained to you, yet unable to prepare you for it in the least. It was told me,—it was in a manner forced on me by the very person herself, whose prior engagement ruined all my prospects…”

Elinor’s torment only makes sense if the reader absorbs the sensitivity of a secret engagement. Many readers bounce off Regency novels due to having little engagement with sensibilities like these.

I use this example not just for the pun in the title of this piece (ok, 80% for the pun) but to illustrate that all personal data can be sensitive. We can’t decide that an engagement, just because it’s usually announced happily, is a non-sensitive piece of data. The history of startups and tech platforms shows that we’re terrible at recognizing this. Many companies have blithely revealed information about women to their stalkers. My first pregnancy, though a secret to everybody I knew due to miscarriage, was not a secret to advertisers online because my search terms were shared. Companies cannot make these decisions for people.

In some areas we have already accepted the privacy principle of “protect all data”. It’s nearly required for personal data in transit, as we’ve built the expectation of TLS everywhere. “There is no such thing as non-sensitive web traffic”, says https://https.cio.gov/everything/, and thus all Web traffic should be encrypted.

—

When we make the case for data portability, it is tempting to forget all this. When we ask companies to make data portability a user right, yet companies have very short lists of allowed destinations due to security barriers, it’s tempting to ask them to dismantle those barriers entirely.

Companies have legal and reputational liability when they let users share personal data via platform features. Companies are well aware of the many opportunities for fraud. For example, users convinced they’re sharing data with a reliable company may be fooled by an impersonation attack. And so companies do what companies do, and build complex protective systems of service identification, data protections, security reviews, OAuth scopes and sensitivity levels.

Those systems are:

- Very expensive, both for platforms and for companies applying for access,

- Significant barriers to users actually porting their data,

- Often wrong about sensitivity level,

- Inconsistent within a platform,

- VERY inconsistent across the industry.

Sensitivity levels are a data classification framework: broad groupings of kinds of data, linked to protection levels. It’s understandable that companies would try this approach for personal data, as companies already use this kind of framework for corporate data. A company can decide that its customer prospect list is “restricted” and its employee foosball chat is merely “private”. A company can decide that vendor X is secure enough to work with restricted data and vendor Y is secure enough to serve employee chat rooms. But does the same model work when companies apply it to personal data?

First, it’s much harder for companies to apply sensitivity levels to all personal data. A major recording artist’s music listening activity is more sensitive than my photos of my kids. My photos are more sensitive than my friend Jamie’s blood-glucose data (because he’s already donated it as a public research dataset). Any reasonable sensitivity classification of listening history, photos and medical data would reverse this ordering. Second, it always seems safer to put data in a more secure category. If some photo albums contain images of passports and driver’s licenses, then all albums are treated as the most sensitive. If some email folders contain password reset links, then all email folders are treated as the most sensitive.

As a result, users are burdened by high protections. When a company forces users to protect their data too carefully, it takes away choice and incentivizes workarounds.
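The “safest category wins” rule described above can be sketched in a few lines. This is only an illustration, not any platform’s actual policy: the level names, the album names and the `category_level` helper are all assumptions made up for the example.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    # Hypothetical levels; real platforms define their own.
    PUBLIC = 0
    PRIVATE = 1
    RESTRICTED = 2


def category_level(item_levels):
    """Category-wide rule: the whole category takes the level of its
    most sensitive item (PUBLIC if the category is empty)."""
    return max(item_levels, default=Sensitivity.PUBLIC)


# Made-up albums, labelled with the level each one would need on its own.
albums = {
    "holiday snapshots": Sensitivity.PRIVATE,
    "garden": Sensitivity.PUBLIC,
    "scanned passport": Sensitivity.RESTRICTED,
}

photos_level = category_level(albums.values())

# Albums that end up more protected than they need to be.
over_protected = [name for name, level in albums.items() if level < photos_level]
```

Under this rule a single passport scan pushes every album to the top level, so the garden photos become as hard to share as the passport.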

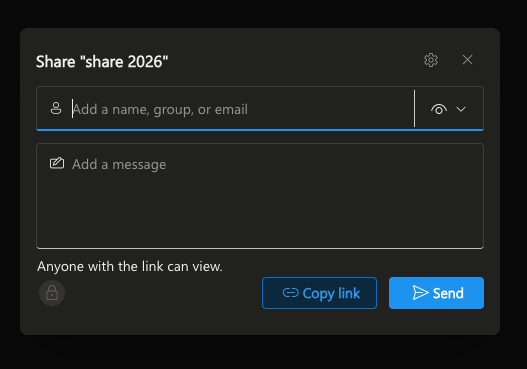

The workarounds are part of why a platform can have inconsistent security protections. Many photo sites, for example, have short lists of trusted partners who can access personal data APIs and do data transfers. On the big platforms, those partners are formally and consistently vetted to make sure they are trustworthy. However, because people do want to share their photos (to photo book printers, to family, to alternate services), the photo album interfaces typically also let users create a shareable link.

Now I can share my photos with an unvetted startup by creating an insecure link. Even if my judgement is sound and the startup is trustworthy, others can still use that link if it leaks.

The situation is even worse with email data access. The only common workaround for email is for users to share their email passwords with third-party software. If I realize that’s terribly risky, I am left with almost no safe choices for third-party email management services.

Protecting users by taking away choice, while also allowing insecure alternatives, is the worst compromise. It’s time to help users make choices with their own data and also to protect them.

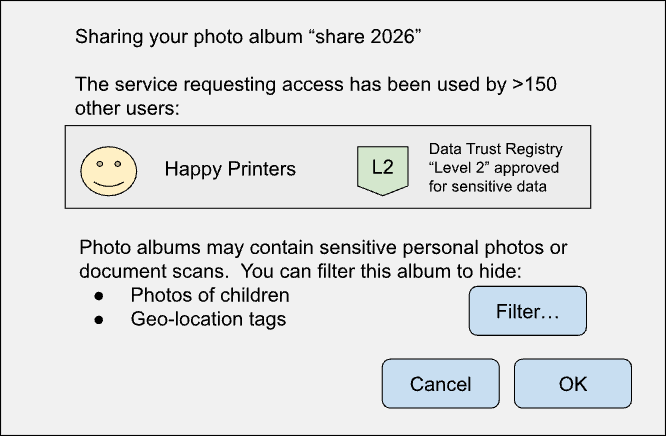

The UX for helping users safely make their own choices is yet to be designed. What would it look like? The user could be shown ratings and offered highly trusted destinations before making a riskier choice. The user could be allowed to decide if their own data is public, private but not very sensitive, or of the highest sensitivity. Companies hosting personal data could offer to filter content objects to help the user choose which content goes where.

Defaults are still important, but after being offered sensible defaults, users could apply their deeper knowledge and trade-off weights.
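One way to picture “sensible defaults, then user choice” is a transfer planner that never blocks anything outright. The sketch below is hypothetical throughout: the `Item`, `Destination` and `plan_transfer` names, the three levels and the idea of a single numeric destination rating are assumptions for illustration, not an existing API or rating scheme.

```python
from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    # Hypothetical levels mirroring "public, private, highest sensitivity".
    PUBLIC = 0
    PRIVATE = 1
    HIGHEST = 2


@dataclass(frozen=True)
class Item:
    name: str
    sensitivity: Sensitivity  # chosen by the user, not by the platform


@dataclass(frozen=True)
class Destination:
    name: str
    rating: Sensitivity  # assumed rating: highest level it is trusted with


def plan_transfer(items, destination, approved=frozenset()):
    """Return (send, ask). Items within the destination's rating are sent
    by default; the rest are sent only if the user approved them by name."""
    send, ask = [], []
    for item in items:
        if item.sensitivity <= destination.rating or item.name in approved:
            send.append(item)
        else:
            ask.append(item)
    return send, ask


items = [
    Item("garden album", Sensitivity.PUBLIC),
    Item("kids album", Sensitivity.PRIVATE),
    Item("passport scan", Sensitivity.HIGHEST),
]
startup = Destination("unvetted photo book startup", Sensitivity.PUBLIC)

# Default plan: only the public album goes without a question.
send, ask = plan_transfer(items, startup)

# The user decides the kids album is fine to share with this startup.
send_after, ask_after = plan_transfer(items, startup, approved={"kids album"})
```

The design choice worth noting is that the `ask` list is a prompt, not a refusal: the platform supplies the default, and the user’s explicit approval moves an item across.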

Let’s improve this whole situation. Over the last 20 years we’ve developed extensive systems and UX allowing users to make their own purchasing decisions online (verified brands, number of buyers, ratings and reviews). Modern online retail shows that this could be an empowering and smooth experience. We can make a lot of progress empowering users with their own data too.